Motion Planning has been an intriguing topic for me ever since I first learned its fundamentals in a course I took in Fall 2015, one of the first Robotics courses I ever took. Learning for the first time about representations such as the Configuration Space, how you could sample it while taking collisions or similar constraints into consideration, and how you could construct graphs of sampled points within those spaces to find a collision-free path through the robot’s workspace was ‘stimulating’, thanks to the elegance and simplicity of these widely applicable solutions. As I went deeper into the topic with my research work in the following period, a sub-component known as Trajectory Generation/Planning, where the goal this time is to connect the dots in those graphs, turned out to be an even more interesting subject. Since then I have been working actively on this topic through class projects and my research project at Kovan Research Laboratory, mostly aiming to find methods for generating trajectories that are as ‘natural’ as possible.

In the literature, there are lots of approaches to generating natural motions for various robots, ranging from point robots to humanoids. Many of these methods use high-degree polynomials that minimize the jerk and the accelerations in the trajectory in order to create as smooth a motion as possible. Others use approaches such as Dynamic Movement Primitives to represent the ‘texture’ of the motions with as few parameters as possible, in search of a generalization for the trajectories to be generated. Still, a comprehensive solution for generating natural motions in a generalized fashion is yet to be invented, and the fact that we do not have a comprehensive definition of ‘natural motion’ is probably not helping either.
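To make the polynomial idea concrete, here is a minimal sketch of the classic rest-to-rest minimum-jerk profile for a single degree of freedom, which is a fifth-degree polynomial in normalized time. It is only an illustration of that family of methods, not the approach used in the project described here. The function names (`minimum_jerk`, `sample_trajectory`) and the elbow-angle numbers in the usage lines are made up for the example.

```python
def minimum_jerk(x0, xf, duration, t):
    """Return (position, velocity, acceleration) at time t.

    Assumes the motion starts and ends at rest (zero velocity and
    acceleration at both ends), which is the textbook minimum-jerk case.
    """
    if duration <= 0:
        raise ValueError("duration must be positive")
    # Normalized time, clamped so queries outside [0, duration] hold the endpoints.
    tau = min(max(t / duration, 0.0), 1.0)
    d = xf - x0
    pos = x0 + d * (10 * tau**3 - 15 * tau**4 + 6 * tau**5)
    vel = d / duration * (30 * tau**2 - 60 * tau**3 + 30 * tau**4)
    acc = d / duration**2 * (60 * tau - 180 * tau**2 + 120 * tau**3)
    return pos, vel, acc


def sample_trajectory(x0, xf, duration, steps):
    """Sample positions at steps + 1 evenly spaced times from 0 to duration."""
    return [
        minimum_jerk(x0, xf, duration, duration * i / steps)[0]
        for i in range(steps + 1)
    ]


# Hypothetical usage: an elbow angle moving from 0 to 90 degrees in 2 seconds.
angles = sample_trajectory(0.0, 90.0, 2.0, 4)
```

The resulting curve is smooth and symmetric around its midpoint, which is exactly why it is a common baseline, and also why it cannot by itself capture the donor-specific ‘texture’ of a motion.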

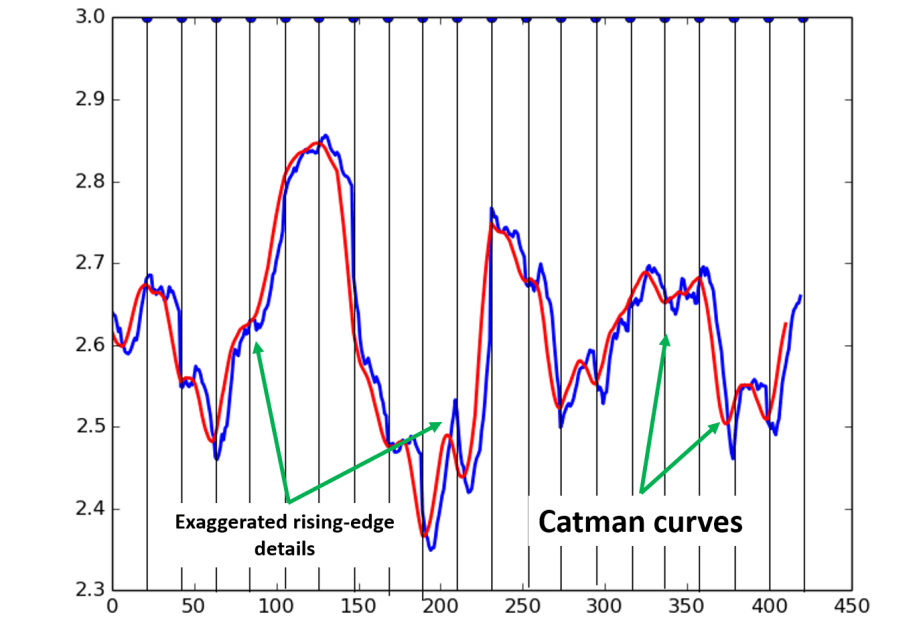

For the project of another class I took, this one on Pattern Recognition (yet another first), I decided to implement part of the ideas I had been carrying around on the topic for a while, and in the end my project partner and I were able to get an even smaller part of these ideas to actually work. The initial idea is simple: train and use a neural network to learn the context of motions from an actual natural motion data set such as this one. This way I believed, and still do, that we could not only get a network that can generalize motions from any point A to any point B, but also help carry and project the emotions/attitudes/characteristics of the donor organism through motions. To clarify this messy sentence, you could simply imagine the network being trained by observing the motions of a toddler or an elder. The outputs of the network could then be used to control, for example, a robot arm that moves with the motion characteristics of an elderly person or a small child. Or a bird, a fish… Whatever the donor data has to offer. We worked with a MoCap database of human motions and parsed elbow joint angles from this data to train our network. In the end we were able to generate one-degree-of-freedom trajectories using this network, and it was really interesting to be able to replicate an unexpected recurring property we had observed in the data set: the Catman Curves, as we came to call them later on. Unfortunately, the data and the visuals I extracted from this work were lost along with an external HDD, thanks to a very careless friend with a habit of dropping things. I have tried to explain what these curves are with a demonstrative image and its caption below.

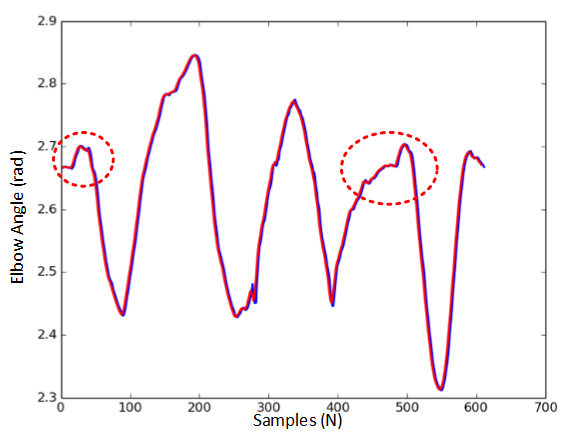

When it comes to capturing the defining characteristics of motion, such a detail was very important to observe, and seeing similar details in the outputs of the trained network was even more interesting, and motivating enough to keep working on the subject. Below are an elbow angle trajectory parsed from actual human motion data and a trajectory we created with our network. Unfortunately, again, this is all I could scavenge from this work, out of an unfinished presentation backup.

Upon completion of the semester, this work was halted at its initial/conceptual results due to lack of time. I am looking forward to the first opportunity to jump back into this study and continue working on it, starting with a multi-DoF application of the network. Until then, the Catman will have to wait.